Team Members: Dr. Liu Liu, Dr. Gladia Hotan, Markus Yeo

About This Project

Whenever students get stuck on a programming problem, an AI tutor is now always within reach. But what do they actually ask it, and does asking help them learn? This project investigates how students seek help from AI tutors in NUS’s introductory programming courses, drawing on detailed interaction logs from Coursemology, the AICET-maintained learning platform powering these courses.

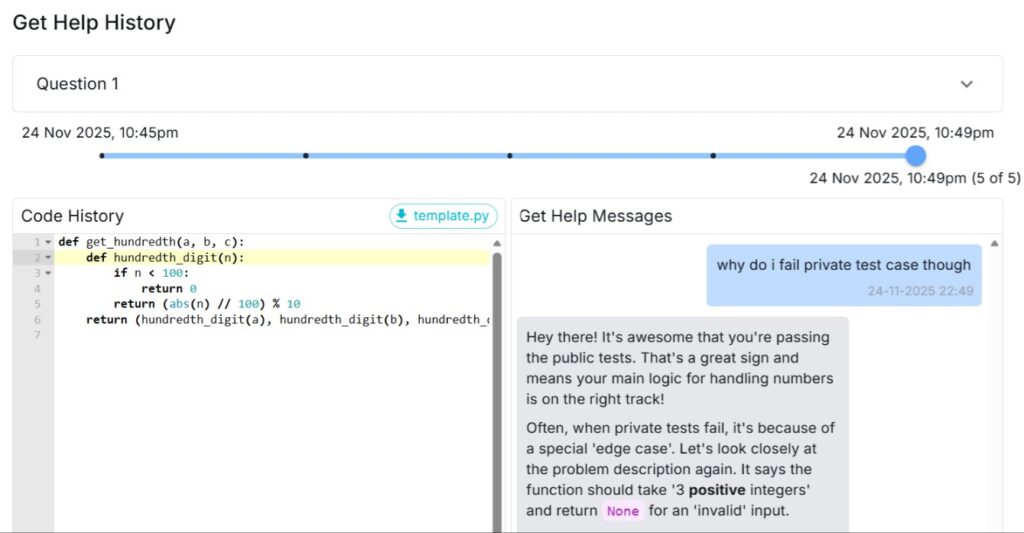

The questions we pursue are deceptively simple. Do students ask the kinds of questions that move them toward genuine understanding, or do they default to whatever takes the least effort to type? When the AI’s reply doesn’t quite land, do students reformulate the problem, or get caught in repetitive cycles? Does experience with the system gradually make students better askers, or are early habits stickier than that?

To answer these, we build a multi-dimensional analytical framework that combines a fine-grained taxonomy of student help-seeking intentions with a measurable proxy for learning progress.

We use Abstract Syntax Tree (AST) edit distance between a student’s current code and a correct solution as that proxy: by measuring the structural gap before and after each AI interaction, and adjusting for individual baselines, we can begin to see not just whether help-seeking helps, but which kinds help, for whom, and when.
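The idea of measuring a structural gap before and after an interaction can be illustrated with a minimal sketch. It rests on several assumptions that are not from the project itself: submissions are taken to be Python, the distance is a simple Levenshtein distance over a pre-order sequence of AST node types (a sequence-based stand-in, not a true tree edit distance), and the function names `node_types`, `structural_gap`, and `progress` are hypothetical. The adjustment for individual baselines is left out.

```python
import ast


def node_types(source: str) -> list[str]:
    """Return AST node type names in pre-order for a Python program."""
    names: list[str] = []

    def visit(node: ast.AST) -> None:
        names.append(type(node).__name__)
        for child in ast.iter_child_nodes(node):
            visit(child)

    visit(ast.parse(source))
    return names


def edit_distance(a: list[str], b: list[str]) -> int:
    """Levenshtein distance between two sequences (insert, delete, substitute)."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, start=1):
        curr = [i]
        for j, y in enumerate(b, start=1):
            curr.append(min(prev[j] + 1,              # delete x
                            curr[j - 1] + 1,          # insert y
                            prev[j - 1] + (x != y)))  # substitute or match
        prev = curr
    return prev[-1]


def structural_gap(student_code: str, reference_code: str) -> int:
    """Approximate structural gap between a submission and a correct solution."""
    return edit_distance(node_types(student_code), node_types(reference_code))


def progress(before: str, after: str, reference: str) -> int:
    """Positive when the code moved structurally closer to the reference."""
    return structural_gap(before, reference) - structural_gap(after, reference)


# Hypothetical example: code before and after one AI interaction.
reference = "def total(xs):\n    s = 0\n    for x in xs:\n        s += x\n    return s\n"
before = "def total(xs):\n    return 0\n"
after = reference

print(progress(before, after, reference))
```

A positive value means the student's code moved closer to the reference after the interaction. A sequence-based distance ignores tree nesting, so a real pipeline would likely use a proper tree edit distance and compare against more than one correct solution.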

These questions matter because the easy assumptions about AI in education don’t always survive close contact with what students actually do. The most consequential design choice for the next generation of AI tutors may not be the model behind them, but the system that surrounds them: one that teaches students how to ask. That is what distinguishes AI that scaffolds real learning from AI that merely offloads the cognitive work that learning requires.

Research Questions

Methods

Learning Analytics

Large-Scale Interaction Log Analysis

LLM-Based Message Classification

Mixed-Methods Analysis

Key Contributions

This project makes three intertwined contributions. Empirically, it offers a large-scale, fine-grained account of how undergraduate students seek help from AI tutors over a full semester, surfacing patterns that smaller studies and self-report measures cannot reach. Methodologically, it develops a multi-dimensional analytical framework that links a help-seeking taxonomy to code-based proxies for learning progress, including AST edit distance and student-adjusted effect estimates, providing a replicable pipeline for evaluating AI-mediated learning at scale. Pedagogically and practically, it lays the foundation for design principles and instructional strategies that help students learn to ask, not just learn to type.