Team Members: Dr. Liu Liu, Dr. Gladia Hotan, Olivia, Marcus Yeo

About This Project

Universities are now full of students using Large Language Models (LLMs), to draft and debug, summarize and explore, sometimes to think through problems they once would have wrestled with on their own. How students actually integrate these tools into their academic lives, and how those patterns shift as their relationship with AI matures, is a question that universities have begun to ask but that empirical research has barely begun to answer. This project sets out to answer it not as a snapshot, but as a process unfolding across the arc of a degree.

Conducted across the NUS School of Computing, the NUS Faculty of Arts and Social Sciences, and NUS College, the project is longitudinal and deliberately cross-disciplinary. A computer science student debugging Python and a humanities student drafting an essay encounter very different versions of the same technology, and a serious account of student LLM use cannot generalize from one to the other. By following students across these faculties over time, the project traces how initial adoption gives way to settled patterns of use, and how those patterns reshape the way students read, write, and reason.

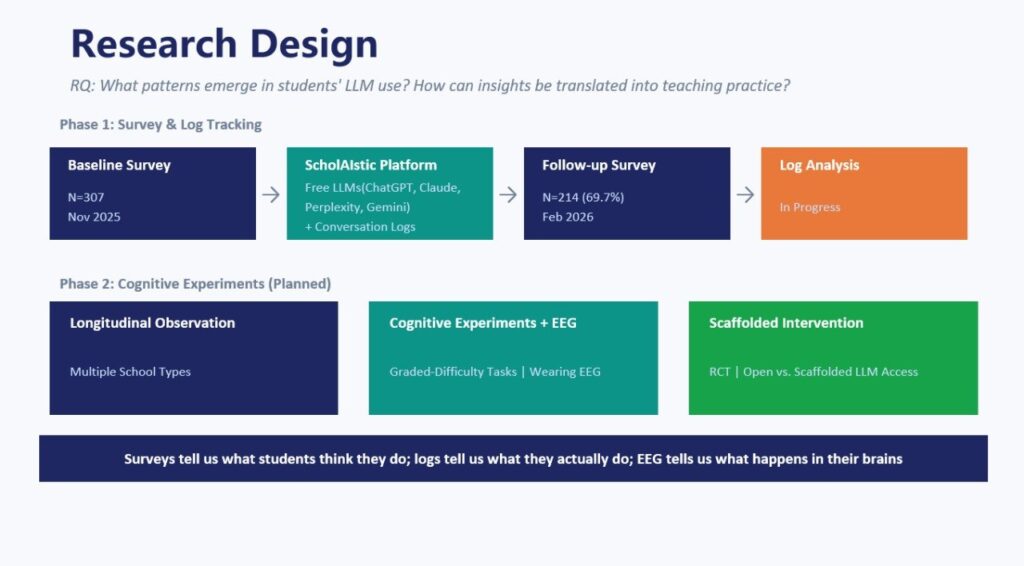

Methodologically, this research project is built around a dual-stream design. Periodic surveys capture students’ self-reported usage, attitudes, perceived risks, and epistemic habits, while conversation logs collected through ScholAIstic, AICET’s purpose-built learning platform, record what students actually do when they sit down with an AI assistant. Holding these two streams alongside each other, the project moves past what students say about their AI use to examine the questions they pose, the way they frame their requests, and whether they engage critically or accept what they are given.

This combination of longitudinal scope, cross-disciplinary breadth, and behavioural realism puts this project among the few studies positioned to track how a generation of students is learning to live with AI, and how that learning is, in turn, reshaping what it means to study, to write, and to know.

Research Questions

Methods

Periodic Survey Instruments

Cross-Faculty Comparison

Longitudinal Mixed-Methods Research

Educational Data Mining

Key Contributions

This project makes three intertwined contributions. Empirically, it offers one of the few longitudinal, cross-faculty accounts of how university students actually use large language models across the arc of a degree, surfacing patterns of adoption, adaptation, and entrenchment that cross-sectional studies cannot reach. Methodologically, it develops a dual-stream research design that holds students’ self-reported AI practices alongside their observed conversational behaviour, providing a replicable framework for studying a say-do gap that single-method approaches routinely overlook. Theoretically and practically, it lays the groundwork for understanding how the steady integration of AI is reshaping core academic practices, with implications for curriculum, AI literacy, and institutional policy in an era when these decisions are still being made largely without evidence.